Tools for recruitment and selection

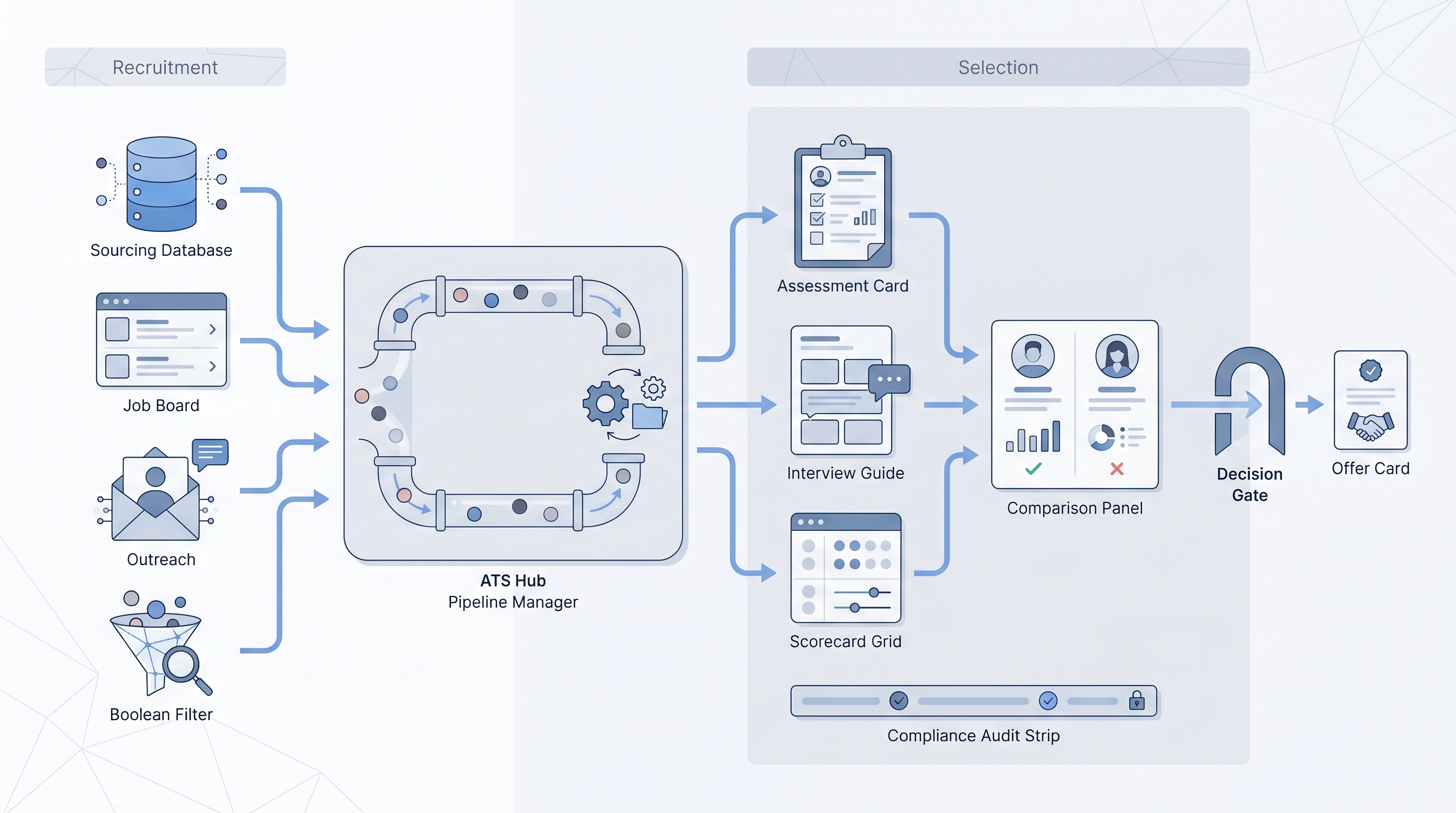

The combined toolkit that supports both phases of hiring: recruitment tools (ATS, job boards, sourcing platforms, outreach automation) that fill the pipeline, and selection tools (assessments, structured interview guides, scorecards, video interview platforms) that evaluate and advance candidates toward an offer.

Michal Juhas · Last reviewed May 15, 2026

What are tools for recruitment and selection?

Tools for recruitment and selection span two connected phases of hiring. Recruitment tools fill the pipeline: ATS platforms, job boards, sourcing databases, outreach automation, and Boolean or semantic search. Selection tools empty it properly: skills assessments, cognitive and psychometric tests, structured interview guides, scorecards, video interview platforms, and comparison dashboards.

The ATS typically sits in the middle, receiving candidates from recruitment channels and routing them through selection stages. That handoff point is where most integration gaps, compliance gaps, and process inconsistencies appear in practice.

In practice

- A sourcing lead at a scale-up described their hiring stack as two separate worlds: the recruiter lived in a sourcing tool and an outreach platform, while the hiring manager only saw the ATS. Neither side could see the other's activity until the same candidate showed up twice. A shared candidate record with clear stage ownership fixed the duplication, and a scorecard template fixed the inconsistent debriefs.

- Practitioners in TA forums often describe the same split: "sourcing fills the funnel, selection empties it properly." The language is useful because it assigns ownership. If sourcing is producing candidates but the pipeline is slow or inconsistent, the bottleneck is usually on the selection side, not in the ads budget.

- An HRBP running a compliance audit found four active assessment vendors, two signed DPAs out of four, and no central record of which tool held candidate data for which roles. The problem was not the tools; it was the absence of a data owner across the combined stack.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need a shared vocabulary for vendor evaluations, compliance reviews, and hiring manager conversations. Skim the first section for the shared picture. Use the second when you are building or auditing your end-to-end stack.

Plain-language summary

- What it means for you: Recruitment tools find and attract candidates. Selection tools evaluate and advance them. Together they are the complete hiring toolkit, and they share a candidate record that must be managed as one data environment.

- How you would use it: Map your current pipeline in two columns. Left: how do candidates get in (job boards, sourcing, referrals, applications)? Right: how do they get evaluated (assessments, interview guides, scorecards, video tools)? Gaps in either column are where inconsistency and compliance risk appear.

- How to get started: Audit the tools your team uses today: which have a signed DPA, which write back to the ATS, and which are ad hoc tools nobody has formally reviewed. Fix the compliance side before buying anything new.

- When it is a good time: Before a significant hiring campaign, when inconsistent evaluation is producing unpredictable quality, or when legal asks for a documented selection rationale.
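
The audit described under "How to get started" can be kept as a small structured list rather than a memory exercise. The following is a minimal sketch in Python, not a prescribed method. The tool names, the `Tool` fields, and the `audit` function are all hypothetical and only mirror the three questions above: signed DPA, write-back to the ATS, and formal review.

```python
from dataclasses import dataclass


@dataclass
class Tool:
    name: str                # hypothetical tool label, not a real vendor
    phase: str               # "recruitment" or "selection"
    dpa_signed: bool         # is there a signed DPA on file?
    writes_to_ats: bool      # does the tool write results back to the ATS?
    formally_reviewed: bool  # has anyone formally reviewed the tool?


def audit(tools):
    """Return a dict mapping each tool name to its list of open issues."""
    findings = {}
    for tool in tools:
        issues = []
        if not tool.dpa_signed:
            issues.append("no signed DPA")
        if not tool.writes_to_ats:
            issues.append("does not write back to the ATS")
        if not tool.formally_reviewed:
            issues.append("ad hoc, never formally reviewed")
        if issues:
            findings[tool.name] = issues
    return findings


# Illustrative data only; replace with your own stack.
stack = [
    Tool("job-board-a", "recruitment", True, True, True),
    Tool("sourcing-extension", "recruitment", False, False, False),
    Tool("assessment-b", "selection", True, False, True),
]

findings = audit(stack)
for name, issues in findings.items():
    print(f"{name}: {', '.join(issues)}")
```

Tools that pass all three checks drop out of the result, so the output is the to-do list to clear before buying anything new.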

When you are running live reqs and tools

- What it means for you: The recruitment and selection stack has a combined data surface. Every vendor that touches candidate data adds a DPA obligation, a retention window, and a deletion mechanism to manage. Running both sides without a central data register creates audit risk.

- When it is a good time: Before enabling AI features on either side. Recruitment-side AI (resume screening, profile matching) and selection-side AI (video scoring, assessment scoring) each need a bias audit checklist, a human review gate, and a model version log before they run on live candidates.

- How to use it: Standardize the candidate record handoff. Define which ATS stage triggers the assessment invite, which stage requires a completed scorecard, and who owns exceptions when a candidate skips a step. Document the criteria for each stage before the search opens, not after the preferred candidate has already emerged.

- How to get started: Run a one-page stack inventory: each tool, its owner, its DPA status, its data retention period, and whether it integrates with the ATS. Share it with HR, legal, and IT before the next vendor renewal cycle.

- What to watch for: Vendors that bundle new AI scoring features in a standard release without opt-in, assessment results stored indefinitely with no deletion mechanism, and scoring criteria that drift between hiring managers because rubrics were never standardized across the two phases.
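
The handoff rules under "How to use it" can be written down as explicit stage requirements before the search opens. The sketch below is one possible way to express them in Python, under stated assumptions: the stage names, the step names, and the `can_advance` function are hypothetical and do not correspond to any particular ATS. It shows which steps must be complete before a stage, and it records a named exception owner when a candidate skips a step.

```python
# Hypothetical stage names and required steps; adapt to your own pipeline.
STAGE_REQUIREMENTS = {
    "assessment": {"screen_passed"},
    "panel_interview": {"screen_passed", "assessment_completed"},
    "offer": {"screen_passed", "assessment_completed", "scorecard_completed"},
}


def can_advance(completed_steps, target_stage, exception_approved_by=None):
    """Return (allowed, missing_steps) for moving a candidate to target_stage.

    A candidate with missing steps may only advance when a named
    exception owner has approved the skip.
    """
    required = STAGE_REQUIREMENTS[target_stage]
    missing = sorted(required - set(completed_steps))
    if not missing:
        return True, []
    if exception_approved_by:
        return True, missing
    return False, missing


# A candidate who has only passed the screen cannot go straight to offer.
blocked = can_advance({"screen_passed"}, "offer")

# The same skip is allowed at panel stage once an exception owner signs off.
excepted = can_advance(
    {"screen_passed"}, "panel_interview", exception_approved_by="ta_lead"
)

print(blocked)
print(excepted)
```

Returning the missing steps even when an exception is approved keeps a record of what was skipped, which is the part an audit usually asks for.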

Where we talk about this

On AI with Michal live sessions, both phases come up together. The sourcing automation track covers the recruitment side: outreach tools, Boolean and semantic search, ATS integration, and where automation breaks. The AI in recruiting track covers the selection side: structured evaluation, scorecard design, AI scoring features, and how to review them before deploying in a live search. If you want both conversations in one room, start at Workshops and bring your current tool list and your biggest consistency pain point.

Around the web (opinions and rabbit holes)

Third-party creators move fast and tooling changes frequently. Treat these as starting points, not endorsements, and verify any claim or tool before connecting candidate data to a new system.

YouTube

- Search "recruitment and selection tools for HR" on YouTube for practitioner walkthroughs of how end-to-end stacks are set up and where the handoffs between recruitment and selection break in practice. Filter by upload date.

- Search "ATS assessment integration recruiting" for technical walkthroughs of how tools connect and where candidate data falls out between systems when integration is missing.

- Search "structured interviewing scorecard hiring AI" for the selection side: how AI scoring is reviewed, when it helps, and what breaks when the panel relies on it alone.

Reddit

- r/recruiting has recurring threads comparing specific tools and asking where the integration between sourcing and evaluation actually works versus what the vendor demos showed.

- r/TalentAcquisition covers TA leader conversations on stack consolidation, which vendors hold up under volume, and where compliance gaps surface in practice.

Quora

- "What are the most commonly used tools in recruitment?" collects practitioner answers across the full spectrum from sourcing to offer.

Recruitment tools versus selection tools

| Category | Recruitment tools | Selection tools |
|---|---|---|
| Primary job | Fill the pipeline | Evaluate and decide |
| Typical owner | Sourcer, recruiter | Recruiter, panel |
| Key risk | Data leakage, outreach compliance | Bias, validity gaps, DPA |
| AI risk | Matching bias, hallucinated profiles | Scoring bias, lack of explainability |
| ATS role | Receives applicants | Routes through stages |
| Compliance focus | GDPR first touch, consent | Adverse impact, retention |

Related on this site

- Glossary: Applicant tracking software, Selection tools for hiring, Assessment tools for recruitment and selection, Scorecard, Boolean search, Outbound talent sourcing, Adverse impact, AI bias audit, Human-in-the-loop (HITL), GDPR first-touch outreach, Remote hiring tools

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Live cohort: Workshops

- Membership: Become a member